Maximum likelihood estimation (MLE) is a general method for finding the function that most likely fits the available data; it therefore addresses a central problem in data science. Depending on the model, the math behind MLE can be very complicated, but an intuitive way to think about it is through the following thought experiment….

Category: published

The binomial distribution

The binomial distribution applies when there is a fixed number n of independent trials, each with two possible outcomes (“success” and “failure”) and a constant probability of success. With 10 tosses of a fair coin, what is the probability of getting 7 heads? \[\text{Prob} = \texttt{dbinom(7, 10, 0.5)} = 0.1171875\] And what is the probability of getting exactly…
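The coin-toss figure can be reproduced in R, the language of the `dbinom` call in the excerpt. This is a minimal sketch; the variable names are illustrative and do not come from the original post.

```r
# Probability of exactly 7 heads in 10 tosses of a fair coin.
n_tosses <- 10   # fixed number of independent trials
p_heads  <- 0.5  # constant probability of "success" (heads)

prob_7 <- dbinom(7, size = n_tosses, prob = p_heads)
print(prob_7)  # 0.1171875

# The same value from the binomial formula:
# choose(n, k) * p^k * (1 - p)^(n - k)
prob_7_manual <- choose(n_tosses, 7) * p_heads^7 * (1 - p_heads)^(n_tosses - 7)
```

Both routes give 120/1024, which matches the value quoted above.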

Fisher’s exact test

Fisher’s exact test is used to compare counts and proportions between groups when small samples of nominal variables are available. It assumes that the individual observations are independent, and that the row and column totals are fixed, or “conditioned.” An example would be putting 12 female hermit crabs and 9 male hermit crabs in an…

The Poisson distribution

A sports team scores 84 points in 21 games, so the average score is 4 points per game. What is the probability that it scores exactly 1 point in a game? Or 6 points, or more than 4? The answer is in the Poisson distribution, whose variance equals its mean, denoted \(\lambda\). The answer to the…
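The three questions can be sketched in R with `dpois` and `ppois`. This assumes the points scored per game are independent counts following a Poisson distribution with \(\lambda = 4\); the variable names are illustrative and not taken from the original post.

```r
# Average score: 84 points over 21 games.
lambda <- 84 / 21  # 4 points per game

# P(X = 1): exactly 1 point in a game
p_one <- dpois(1, lambda)

# P(X = 6): exactly 6 points in a game
p_six <- dpois(6, lambda)

# P(X > 4): more than 4 points in a game (upper tail, excluding 4 itself)
p_more_than_4 <- ppois(4, lambda, lower.tail = FALSE)

print(c(one = p_one, six = p_six, more_than_4 = p_more_than_4))
```

Note that `ppois(4, lambda, lower.tail = FALSE)` returns P(X > 4), not P(X >= 4), so it equals `1 - sum(dpois(0:4, lambda))`.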

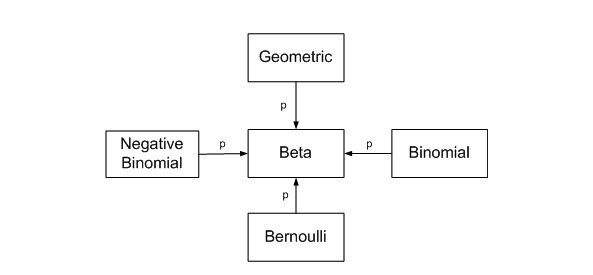

The beta distribution

Contents: Definition; Using the mean; Using ranges for the prior; Informative vs. uninformative priors; Mean, median, variance; Highest Density Interval; References; Addenda

Definition: The Beta distribution represents a probability distribution of probabilities. It is the conjugate prior of the binomial distribution (if the prior and the posterior distribution are in the same family, the prior and posterior…

RMarkdown to WordPress

One option is to create your markdown document in R, using \(\LaTeX\), knit it to HTML, then copy the file content into WordPress and add the following JavaScript code at the top: This will load images into the blog post itself and preserve the \(\LaTeX\) formatting. I prefer to use the MathJax-LaTeX plugin, and use the…

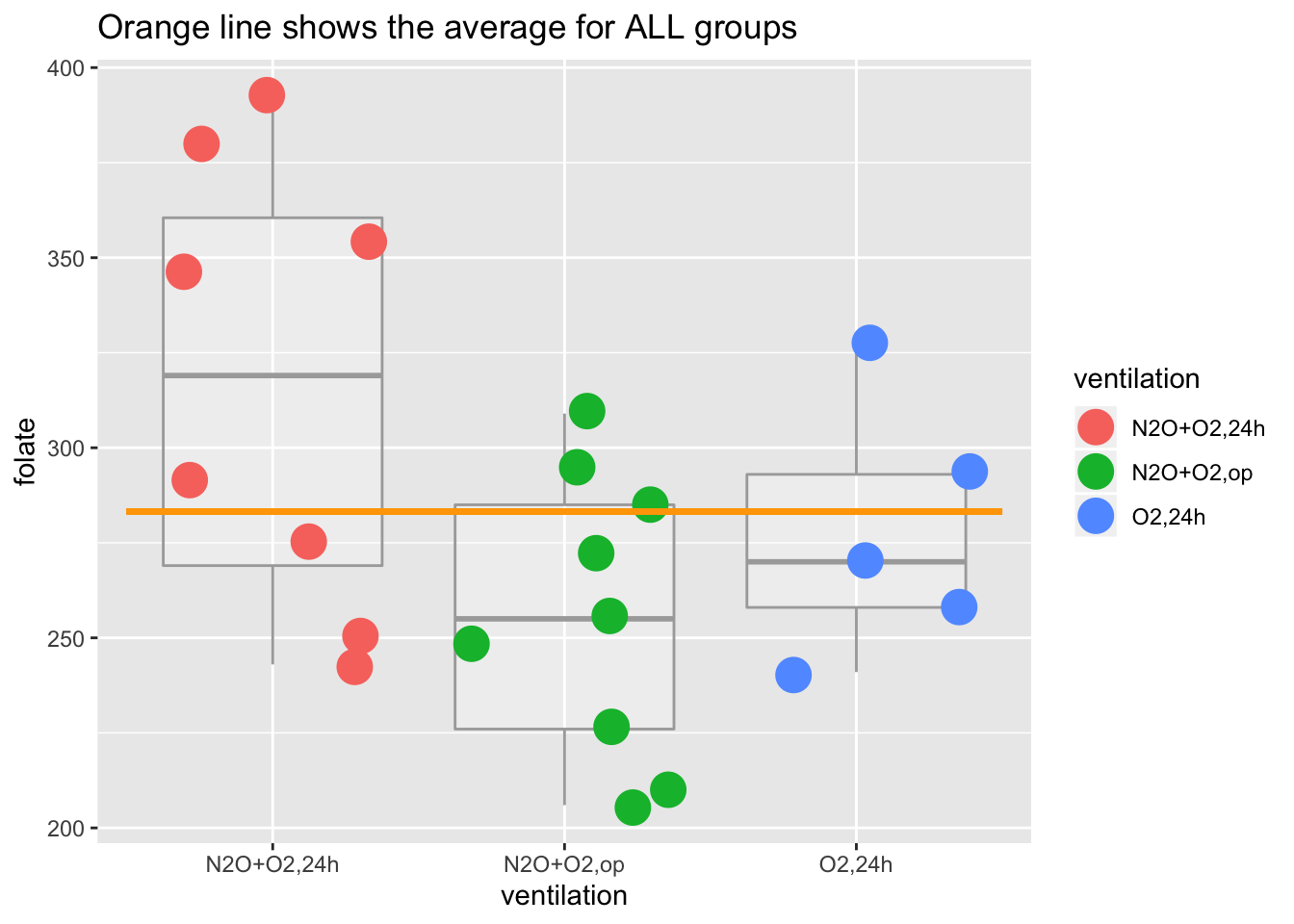

One-way ANOVA

For a simple exercise to understand one-way ANOVA, I will use the data set red.cell.folate from the package ISwR (see the book “Introductory Statistics with R” by Peter Dalgaard) but will also generate my own data. And now (drum roll) … it’s time to run the ANOVA. Let’s look at what this ANOVA table means….

Information criteria: AIC, AICc, BIC

Information-theoretic approaches view inference as a problem of model selection. The best model is the one that has the least information loss relative to the true model. Information criteria (IC) are estimates of the Kullback-Leibler information loss, which cannot be calculated in real-life models. The best known IC is the Akaike IC…
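As a rough illustration of how the three criteria in the title are computed, here is a sketch using the standard textbook formulas rather than anything quoted from the post. The model and the built-in `cars` data set are assumptions chosen only for the example.

```r
# Fit a simple linear model on R's built-in `cars` data (illustrative choice).
fit <- lm(dist ~ speed, data = cars)

ll <- as.numeric(logLik(fit))  # maximized log-likelihood
k  <- attr(logLik(fit), "df")  # estimated parameters (here 3: intercept, slope, sigma)
n  <- nobs(fit)                # number of observations

aic  <- 2 * k - 2 * ll                          # Akaike information criterion
aicc <- aic + (2 * k * (k + 1)) / (n - k - 1)   # small-sample correction of AIC
bic  <- k * log(n) - 2 * ll                     # Bayesian information criterion

print(c(AIC = aic, AICc = aicc, BIC = bic))
```

The hand-computed `aic` and `bic` should agree with R's built-in `AIC(fit)` and `BIC(fit)`; base R has no AICc function, so that one is computed by hand. Lower values indicate less estimated information loss.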

Does the p-value overestimate the strength of evidence?

Thom Baguley points to the standardized or minimum LR (p. 381) to answer this question. The minimum LR represents a worst-case scenario for the null in that it compares the null against the MLE of the observed data, i.e. the most likely (strongest) possible hypothesis supported by the data, and is defined as …

Inference via likelihood

The likelihood school affirms that likelihood ratios are the basic tool of inference. The likelihood is the (joint) probability of observed data D, conditional on the hypothesis A being true. Given two hypotheses, A and B, it is meaningless to assess evidence except by comparing the evidence favoring hypothesis A over hypothesis B….